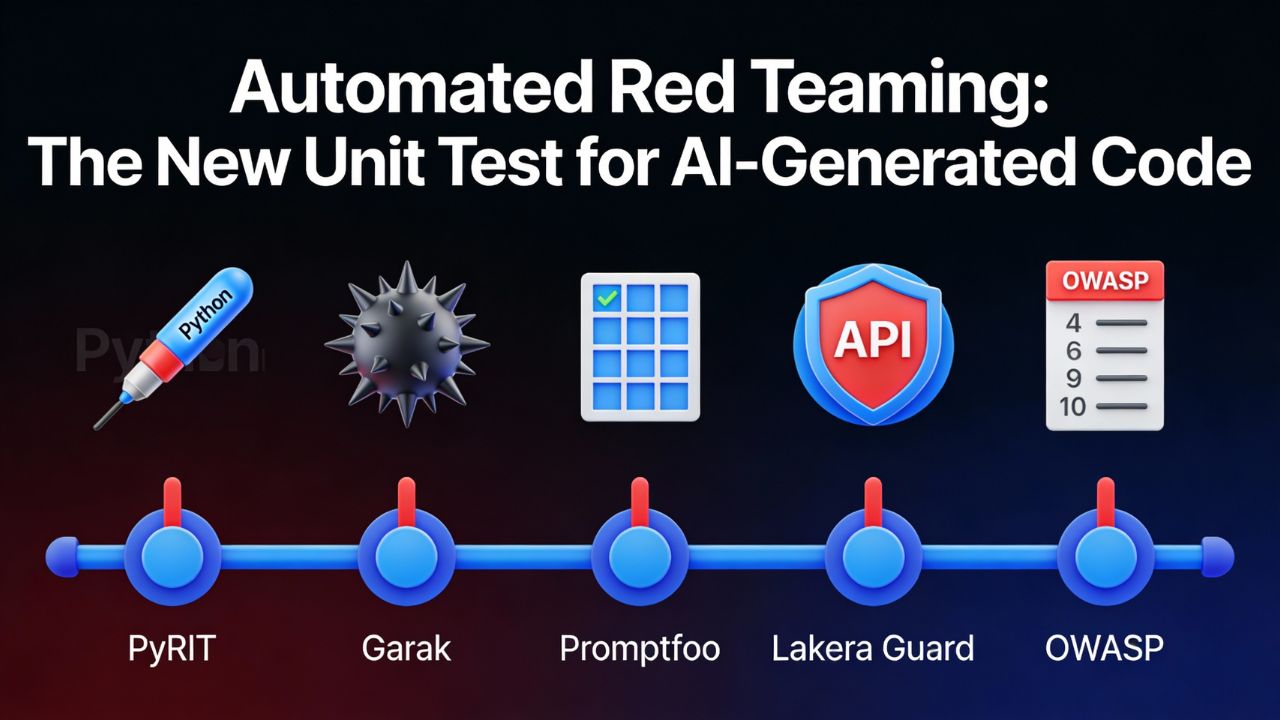

Automated Red Teaming: The New "Unit Test" for AI-Generated Code

If a Scrum team uses AI to write code, it also needs AI to break it before release. In 2026, red teaming stops being a one‑off penetration test and becomes an automated stage in the CI/CD pipeline that runs on every commit.

Tools like PyRIT, Garak, Promptfoo, and Lakera Guard let teams probe large language model features for OWASP LLM Top 10 risks as part of the Definition of Done instead of waiting for an annual assessment.

From Hacking Exercise to QA Practice

Traditional red teaming relied on expensive consultants running manual attack scenarios a few times per year, which left most day‑to‑day changes untested. Automated frameworks now let teams encode those scenarios as repeatable tests that execute whenever a pull request touches an AI‑powered workflow.

For Scrum teams, the mental model shifts from “we were red‑teamed last year” to “our AI story is not Done until it passes automated red‑team tests in the pipeline.”

Red Teaming Toolset for Agile Pipelines

The table below mirrors the DevAgentOps and copilot toolset style and focuses on how each automated red‑teaming tool behaves inside a real CI/CD pipeline rather than in a lab.

| Tool | Best Fit | Where It Runs in CI/CD | Agile & DoD Impact |

|---|---|---|---|

|

PyRIT Risk probes |

Teams building custom LLM apps on Azure or other clouds that need systematic security probing. | Orchestrates attack playbooks against LLM endpoints or agents from pipeline jobs or scheduled runs, using configurable scenarios that simulate prompt injection, data exfiltration, and policy bypass attempts. | Turns red teaming into a repeatable test suite so stories involving AI features must pass PyRIT runs before being marked Done in a Sprint backlog. |

|

Garak LLM fuzzing |

Teams that want broad, automated fuzzing of LLM behaviors across multiple providers and models. | Executes large batches of adversarial prompts against APIs or local models using plugins mapped to common LLM weaknesses, exporting structured reports that pipelines can treat as artifacts. | Supports the strategy “If your AI feature has not been red‑teamed by Garak, it is not Done” by making Garak status part of acceptance criteria. |

|

Promptfoo Deterministic tests |

Product teams needing deterministic, regression‑friendly LLM tests aligned with OWASP LLM Top 10 risks. | Runs prompt test suites as CLI jobs in Jenkins, GitHub Actions, or GitLab, with YAML‑defined scenarios and scoring functions that can be wired into quality gates. | Lets Scrum teams lock in “known good” behaviors so new prompts or model versions cannot regress without failing pipeline tests and blocking Story completion. |

|

Lakera Guard API guardrails |

Organizations needing runtime protection and policy enforcement on LLM APIs and chat endpoints. | Sits in front of LLM APIs as a policy engine, filtering prompts and responses for jailbreaks, sensitive data leakage, and unsafe content before they reach users or backends. | Extends the Definition of Done beyond static tests by requiring that production AI endpoints are fronted by guardrails enforcing organizational policies. |

|

OWASP LLM Top 10 Risk baseline |

Security and QA leaders standardizing on a shared vocabulary of LLM risks for testing and governance. | Provides a taxonomy of issues such as prompt injection, training data leakage, and model denial of service that can be encoded into Promptfoo suites, PyRIT scenarios, or Garak plugins. | Lets teams express DoD items as “no critical OWASP LLM Top 10 findings remain” rather than ad‑hoc lists, aligning backlog items and tests with an industry standard. |

PyRIT, Garak, and the Definition of Done

PyRIT is an open‑source framework for security risk identification in generative AI systems, designed to help engineers run structured probes instead of ad‑hoc experiments. It supports configurable attack scenarios and can be driven from CI jobs so tests execute whenever code or configuration changes.

Garak focuses on large‑scale probing of language models, using plugins to generate adversarial prompts that search for jailbreaks, data leakage, and other misbehaviors across multiple model backends. Together, they let teams say: “If this AI feature has not passed PyRIT and Garak runs, it is not Done.”

Making Red Teaming a Scrum Habit

- Add a DoD item to AI‑related stories: “PyRIT and Garak suites pass with no high‑severity findings for this feature or its prompts.”

- Trigger PyRIT and Garak jobs on pull requests that touch prompts, retrieval chains, or safety policies, not just application code.

- Expose PyRIT and Garak results on Sprint boards so product owners can see security test status alongside unit and integration tests.

Promptfoo for Deterministic LLM Regression Testing

Promptfoo provides a CLI and configuration format for running repeatable LLM tests, including red‑team style prompts mapped to OWASP LLM Top 10 categories. Teams define inputs, expected behaviors, and scoring functions, then run suites as part of their regular CI jobs.

Because tests are deterministic and versioned in git, Promptfoo is well suited to regression testing where a model upgrade or prompt refactor should not silently reintroduce vulnerabilities that were previously caught.

Integrating Promptfoo into Jenkins or GitHub Actions

- Store Promptfoo configuration under a `/red-team-tests` or `/llm-tests` directory in the repo so changes are reviewed like code.

- Add a Jenkins stage or GitHub Actions job that runs `promptfoo test` and fails the pipeline when scores fall below thresholds tied to OWASP risk levels.

- Use result exports to generate trend charts showing how often AI stories fail red‑team tests across Sprints, making risk visible to stakeholders.

Lakera Guard as the Runtime Safety Net

While PyRIT, Garak, and Promptfoo focus on pre‑deployment testing, Lakera Guard adds a runtime safety layer that inspects prompts and responses via an API. It can block or modify requests that violate policies such as sensitive data exposure, jailbreak attempts, or abusive content.

Integrating Lakera Guard into the same CI/CD pipelines that deploy LLM services ensures environments are consistently configured, and policies can be managed as code alongside application changes.

Continuous Red Teaming in the Pipeline

- Deploy Lakera Guard proxies or middleware as part of infrastructure‑as‑code so every AI endpoint inherits the same protections by default.

- Feed blocked requests and violations back into PyRIT, Garak, or Promptfoo suites to expand automated red‑team coverage over time.

- Track incidents against OWASP LLM Top 10 categories to confirm that automated tests are reducing real runtime issues across Sprints.

Automated Red Teaming as a True "Unit Test"

For most AI features, the smallest meaningful unit of behavior is not a single function, but a prompt‑plus‑policy combination, so unit tests must exercise that combination rather than only application code. Automated red‑team frameworks let developers treat LLM behaviors as testable units with clear pass or fail outcomes.

When PyRIT, Garak, Promptfoo, and Lakera Guard are wired into CI/CD, the Definition of Done becomes measurable: no critical OWASP LLM Top 10 issues, tests passing on every commit, and guardrails enforcing policy in production, all without waiting for a separate security gate at the end.

Already working on DevAgentOps and AI security? Read these related guides: DevAgentOps 2026 – The Agile Guide to Autonomous Security and Red Teaming and Choosing a Security Copilot for Your Pipeline: Microsoft vs. Google vs. CrowdStrike in CI/CD .

FAQ: Automated Red Teaming

Q: What is automated red teaming for AI-generated code?

A: Automated red teaming uses tools such as PyRIT, Garak, and Promptfoo to continuously probe LLM-based features for vulnerabilities as part of CI/CD pipelines, instead of relying only on occasional manual penetration tests.

Q: How can PyRIT be integrated into a Jenkins or GitHub Actions pipeline?

A: PyRIT can be installed in pipeline jobs and configured with attack playbooks that target LLM endpoints or agents. CI stages run PyRIT scenarios on each relevant commit and fail the build when high-severity risks are detected.

Q: What is the difference between Garak and Promptfoo for agile teams?

A: Garak focuses on large-scale fuzzing and adversarial probing of LLMs across providers, while Promptfoo provides deterministic, regression-friendly test suites with expected outcomes. Many teams run Garak for broad discovery and Promptfoo for ongoing regression testing.

Q: How do automated red teaming tools map to the OWASP LLM Top 10?

A: The OWASP LLM Top 10 defines categories such as prompt injection and training data leakage. Frameworks like PyRIT, Garak, and Promptfoo offer test cases and plugins aligned to these categories so teams can measure coverage and express Definition of Done items against the standard.

Q: What role does Lakera Guard play compared to PyRIT or Promptfoo?

A: Lakera Guard enforces safety and data protection policies at runtime by filtering prompts and responses through an API. It complements offline testing tools like PyRIT and Promptfoo by blocking jailbreaks, sensitive data leaks, and unsafe content in production.

Sources and References

- GitHub – PyRIT: Python Risk Identification Tool for generative AI

- PyRIT Documentation

- Help Net Security – PyRIT open‑source framework to find risks in generative AI systems

- Arxiv – Garak: A Framework for Security Probing Large Language Models

- GBHackers – Garak Open Source LLM Vulnerability Scanner for AI Red‑Teaming

- Promptfoo – OWASP LLM Top 10 Red‑Team Testing Guide

- Promptfoo – OWASP Top 10 LLM Security Risks TLDR

- Lakera Guard – Integration Guide

- OWASP – Top 10 for Large Language Model Applications