Why Your enterprise ai agent orchestration Will Fail (And How Sprint Planning Fixes It)

Key Takeaways

- Deploying a single bot is easy, but without enterprise ai agent orchestration, scaling multiple agents results in cascading system failures and untrackable chaos.

- Merely deploying internal enterprise ai agents without an operational framework destroys ROI.

- Effective orchestration relies on a "Supervisor Agent" hierarchy to delegate tasks and resolve conflicts between subordinate AI models.

- You must radically adapt Agile methodologies: Sprint planning for AI agents requires defining strict "Agentic Definitions of Done" and API token budgets.

- Integrating FinOps into your orchestration layer is non-negotiable to prevent infinite logic loops that drain your IT budget.

Deploying one AI agent is a neat trick; deploying fifty creates untrackable chaos.

Many technical leaders assume that once they finish building internal enterprise ai agents, the hard work is over.

They deploy these autonomous workers into their repositories and expect immediate, frictionless productivity. This is a massive miscalculation. Siloed bots destroy ROI.

When multiple agents interact with the same codebase, APIs, or databases without a centralized traffic controller, they overwrite each other's work and trigger infinite conflict loops.

Introduction: The Multi-Agent Chaos

Without proper enterprise ai agent orchestration, your scaling efforts will collapse.

Worse, a decentralized multi-agent system quickly becomes a ticking compliance time bomb. To fix this, modern Product Managers and Engineering Leads must fuse technical orchestration with operational Agile frameworks.

This deep dive reveals why your current AI deployment is set up to fail, and exactly how to implement Sprint Planning for AI agents to bring order to the chaos.

The Anatomy of enterprise ai agent orchestration

What happens when your QA agent rejects the code written by your Developer agent, but the Developer agent lacks the context to understand why?

They get stuck in a loop. This is the core problem that enterprise ai agent orchestration solves.

It is not just about connecting APIs; it is about establishing a chain of command and communication protocols between autonomous entities.

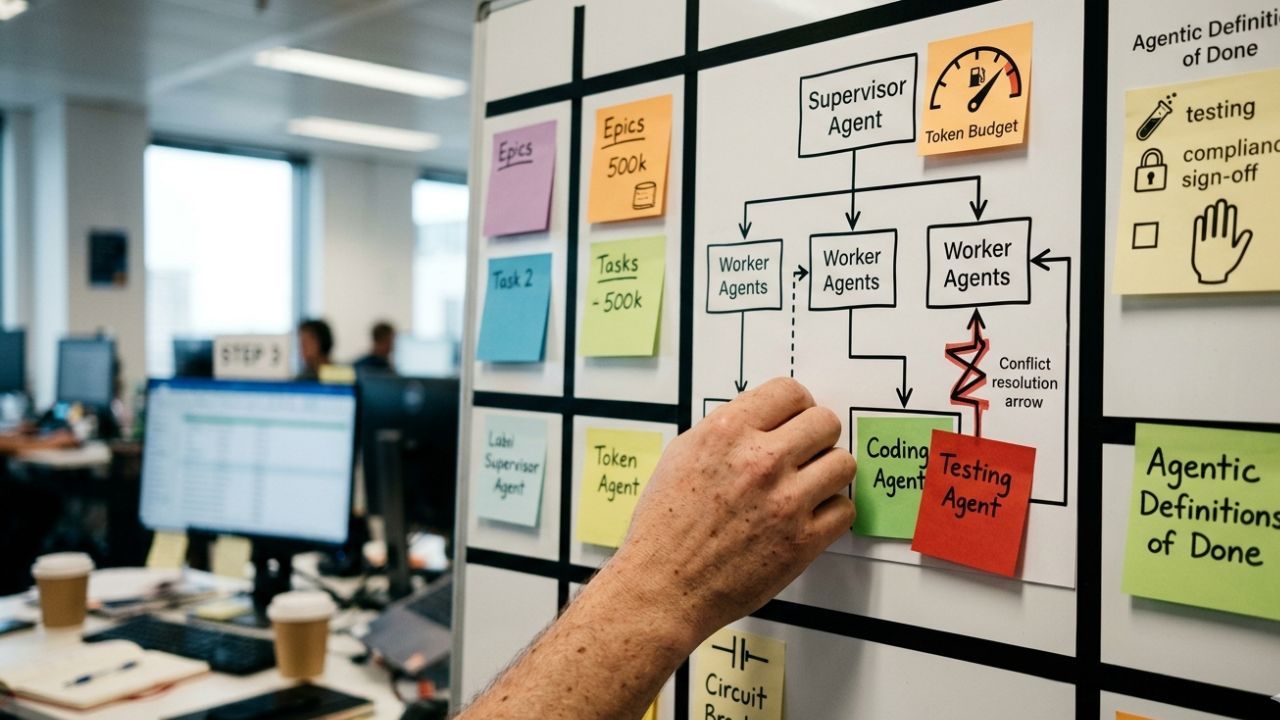

The Supervisor Agent Hierarchy

The most effective orchestration model is hierarchical. You do not let fifty agents operate as peers.

- The Supervisor Agent: This is the AI equivalent of a Scrum Master or Lead Engineer. It receives the overarching goal from human operators.

- The Worker Agents: The Supervisor breaks the goal down and delegates subtasks to specialized Worker Agents (e.g., a Data Retrieval Agent, a Coding Agent, a Testing Agent).

- The Review Protocol: Worker agents report back to the Supervisor. If a task fails, the Supervisor analyzes the error and re-routes the task or flags a human engineer.

This hierarchy ensures that conflicts are resolved centrally, rather than allowing worker agents to endlessly battle over identical repository files.

How to do Sprint Planning for AI Agents

You cannot manage a Supervisor Agent using traditional Agile story points. AI does not experience fatigue in the same way humans do, but it is constrained by API rate limits, token windows, and logic thresholds.

To prevent orchestration failure, you must adapt your Sprint Planning ceremonies.

1. Shift from 'Story Points' to 'Compute Budgets'

During Sprint Planning, Product Managers usually assign Fibonacci sequence numbers to estimate human effort.

For AI agents, effort is measured in compute and tokens. When adding an issue to the Supervisor Agent's backlog, you must define a hard stop.

"Agent is authorized to spend up to 500,000 tokens on this refactoring task before requiring human intervention."

2. Establish Agentic Definitions of Done (DoD)

An AI agent will claim a task is "done" the moment its script runs without throwing a fatal error. Your Sprint Planning must outline a stricter DoD.

- Has the code passed the CI/CD automated testing suite?

- Has the Security Compliance Agent signed off on the pull request?

- Has the human-in-the-loop (HITL) reviewed the architectural choices?

3. Plan for Asynchronous Bottlenecks

Orchestrated agents work at lightspeed compared to human engineers, which creates a massive backlog of Pull Requests.

Your Sprint Planning must allocate heavier human capacity to Code Review rather than Code Writing.

If your human engineers are too busy to review the AI's output, the entire orchestration pipeline stalls.

Guarding Against the FinOps Disaster

One of the primary reasons un-orchestrated AI deployments fail is the hidden cost.

An autonomous AI agent caught in a logic loop—trying and failing to hit a broken internal API—can burn through your monthly IT budget in 45 minutes.

This is where your orchestration layer intersects with managing ai agent api costs.

Your Supervisor Agent must be programmed with strict FinOps thresholds.

During the Sprint Planning phase, every Epic assigned to the AI must include a financial circuit breaker. If an agent fails to resolve a conflict within three iterations, the orchestrator must freeze the process and alert a human.

Resolving Multi-Agent Conflicts in Production

Even with perfect Sprint Planning and budget limits, autonomous agents will inevitably clash.

Imagine two agents tasked with optimizing database queries. Agent A adds an index to speed up read times, while Agent B deletes that same index to optimize write times.

Implementing the Conflict Resolution Matrix

- Deterministic Rules: Your orchestration framework must contain hardcoded, non-AI logic that prioritizes certain actions. (e.g., "Security agent overrides Performance agent").

- State Management: Use frameworks like LangGraph to maintain a persistent memory "state" for the entire multi-agent system. If Agent B knows why Agent A made a change, it is less likely to overwrite it blindly.

- Human-in-the-Loop Escalation: When the Supervisor Agent detects a stalemate between two Worker Agents, it halts execution. It packages the context of the disagreement and flags the human Product Manager for a final decision.

Conclusion

Building AI bots is a software engineering problem; managing them is an organizational challenge.

If you ignore the necessity of enterprise ai agent orchestration, your autonomous workforce will quickly devolve into an expensive, chaotic liability.

To truly harness the ROI of generative AI, technical leaders must move beyond the codebase and focus on the operational framework.

By integrating strict Supervisor Agent hierarchies, managing your API token budgets, and redefining your Agile Sprint Planning ceremonies for an asynchronous workforce, you can scale your AI initiatives securely.

Stop letting siloed bots destroy your IT budget. Build the orchestration layer, plan your AI sprints with precision, and take control of your enterprise architecture today.

Frequently Asked Questions (FAQ)

What is enterprise ai agent orchestration?

It is the centralized framework and set of protocols used to manage, coordinate, and monitor multiple autonomous AI agents within a business environment, ensuring they work collaboratively toward unified goals without conflict.

Why is orchestration necessary for multi-agent systems?

Without orchestration, individual agents operate in silos. This leads to redundant work, API conflicts, code overwrites, and unmanageable IT costs. Orchestration aligns their actions and maintains system stability.

What is a supervisor agent in an orchestration hierarchy?

A supervisor agent acts as the project manager for other AI models. Instead of doing the raw technical work, it interprets human prompts, delegates subtasks to specialized worker agents, and reviews their output for quality and consistency.

How do you resolve conflicts between autonomous agents?

Conflicts are resolved through a centralized orchestrator using deterministic rules (e.g., prioritizing security protocols over speed), shared memory states to provide context, and escalating unresolvable loops to human engineers.

How do you handle failure states in a multi-agent system?

When a worker agent fails, the orchestrator prevents a system crash by logging the error, analyzing the failure context, and either re-prompting the agent with corrected parameters or freezing the workflow for human intervention.