How to Build a Custom GPT for Scrum (Without Leaking Data)

Key Takeaways

- Public LLMs are a security nightmare: Pasting proprietary sprint data into public AI models is a massive corporate data leak waiting to happen.

- Enterprise boundaries are mandatory: You must utilize secure, zero-data-retention environments to protect your intellectual property.

- Context is everything: A generic AI cannot coach your team. You must learn how to build a custom GPT for Scrum that understands your specific Definition of Done.

- Automate without exposing IP: By securely connecting your agile tracking software via protected APIs, you can automate reporting without risking code leaks.

- Own your workflow: A dedicated, custom agile AI assistant acts as a tireless co-facilitator, reclaiming hours of administrative overhead.

Are you pasting proprietary sprint data into public ChatGPT? Stop immediately. Every time a well-meaning Scrum Master copies a poorly defined user story or a sensitive bug report into a public Large Language Model (LLM), that text leaves the company's control and, depending on the provider's settings, may be retained or used to train future models.

As we established in our foundational guide, AI Scrum Master: Why Manual Agile Coaching Is Dead, the future belongs to facilitators who leverage AI. However, that leverage cannot come at the expense of enterprise security.

To safely harness the power of artificial intelligence, you cannot rely on off-the-shelf consumer chatbots. You need a dedicated agile AI assistant that lives strictly within your company's secure firewall.

This guide shows you how to build a custom GPT for Scrum so you can automate your agile ceremonies without compromising your proprietary data.

The Shadow AI Crisis in Agile Environments

The rapid adoption of AI has created a phenomenon known as "Shadow AI." This occurs when agile teams, desperate to increase their velocity, bypass IT security to use unauthorized generative AI tools.

The Danger of Public Training Sets

When you use a free or standard consumer tier of an LLM, your inputs are typically logged and, unless you opt out, may be used to train future iterations of that model.

If your team is discussing a critical vulnerability in your payment gateway during a sprint retrospective, and you feed those notes into a public chatbot to generate action items, the details of that vulnerability now sit on a third party's servers, outside your control.

That breaks the data-handling rules at the heart of most enterprise security programs, zero-trust or otherwise.

Why Generic AI Fails the Scrum Framework

Beyond the severe security risks, generic AI is simply bad at enterprise Scrum. It does not know your team's historical velocity, your unique product architecture, or your specific Jira workflows.

Without deep, contextual knowledge of your Agile Release Train, the AI will generate useless, cookie-cutter advice.

To get real value, you must build secure GPTs for enterprise scrum. This ensures the model's outputs are deeply aligned with your actual operational reality.

Step-by-Step: How to Build a Custom GPT for Scrum

Building your own AI assistant does not require you to be a machine learning engineer. However, it does require strict adherence to data governance and prompt engineering best practices.

Here is the blueprint for how to build a custom GPT for Scrum.

Phase 1: Establish the Secure Environment

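Do not use the consumer version of ChatGPT. To build a secure assistant, you must use an enterprise-grade environment that contractually excludes your data from model training and, ideally, offers a "zero data retention" option. Confirm the exact retention terms with each vendor, because they vary by product and plan.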

Approved Enterprise Environments:

- ChatGPT Enterprise / Team workspaces: OpenAI states that, by default, your data, prompts, and uploaded files in these tiers are excluded from model training.

- Azure OpenAI Service: This runs the LLM inside your company's own Microsoft Azure subscription, under the same access controls and network boundaries as the rest of your Azure infrastructure.

- Amazon Bedrock or Google Cloud Vertex AI: Similar to Azure, these platforms let you reach hosted models privately from within your VPC (Virtual Private Cloud).

Once you have secured the proper licensing, you can begin configuring the custom instructions.
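One way to make the "approved environments only" rule enforceable is a small guard that rejects any model endpoint outside an allowlist. The sketch below is illustrative: `APPROVED_HOST_SUFFIXES` and the internal gateway hostname are assumptions you would replace with whatever your security team has actually signed off on.

```python
from urllib.parse import urlparse

# Assumption: host suffixes approved by your security team. Replace with your own.
APPROVED_HOST_SUFFIXES = (
    ".openai.azure.com",      # Azure OpenAI resource endpoints
    ".llm.example.internal",  # hypothetical internal gateway
)


def is_approved_endpoint(url: str) -> bool:
    """Return True only for HTTPS URLs whose host ends with an approved suffix."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    if parsed.scheme != "https":
        return False
    return any(host.endswith(suffix) for suffix in APPROVED_HOST_SUFFIXES)


if __name__ == "__main__":
    for candidate in (
        "https://contoso-agile.openai.azure.com/",
        "https://chat.openai.com/",
    ):
        print(candidate, "->", is_approved_endpoint(candidate))
```

Calling this check before every outbound request keeps a misconfigured script from quietly sending sprint data to a consumer endpoint.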

Phase 2: Architecting the System Prompt

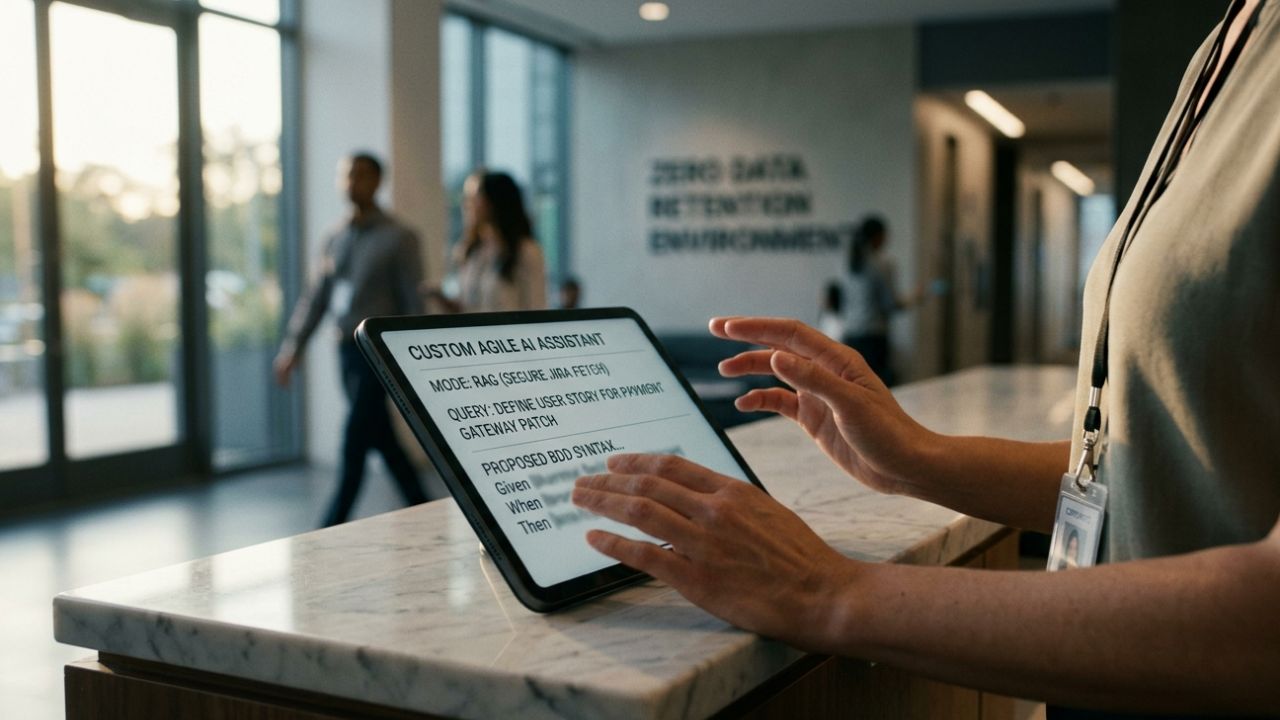

The "System Prompt" is the foundational brain of your custom GPT. It dictates the AI's persona, its rules of engagement, and its absolute constraints.

A weak system prompt yields a weak Scrum Master. Your prompt must be specific about the assistant's role and strict about what it may and may not do.

Example System Prompt Framework:

- The Persona: "You are a Senior Enterprise Scrum Master and Agile Coach. You strictly adhere to the 2020 Scrum Guide."

- The Constraint: "You will never invent data. If you are asked to estimate a story point without historical context, you will refuse and ask for the team's historical velocity."

- The Output Format: "All generated user stories must strictly follow the Behavior-Driven Development (BDD) Gherkin syntax (Given/When/Then)."
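The three-part framework above can be kept in version control as structured data rather than a loose text blob. This is a minimal sketch, assuming the role/content message list that most chat-style LLM APIs accept; the `SystemPromptSpec` class and `build_messages` helper are hypothetical names, not part of any vendor SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemPromptSpec:
    """Holds the three sections of the system prompt framework."""
    persona: str
    constraint: str
    output_format: str

    def render(self) -> str:
        # Join the sections with blank lines so each rule stays visually distinct.
        return "\n\n".join([self.persona, self.constraint, self.output_format])


DEFAULT_SPEC = SystemPromptSpec(
    persona=(
        "You are a Senior Enterprise Scrum Master and Agile Coach. "
        "You strictly adhere to the 2020 Scrum Guide."
    ),
    constraint=(
        "You will never invent data. If you are asked to estimate a story point "
        "without historical context, you will refuse and ask for the team's "
        "historical velocity."
    ),
    output_format=(
        "All generated user stories must strictly follow the Behavior-Driven "
        "Development (BDD) Gherkin syntax (Given/When/Then)."
    ),
)


def build_messages(spec: SystemPromptSpec, user_request: str) -> list:
    """Pair the rendered system prompt with a single user request."""
    return [
        {"role": "system", "content": spec.render()},
        {"role": "user", "content": user_request},
    ]


if __name__ == "__main__":
    for message in build_messages(DEFAULT_SPEC, "Draft a story for password reset."):
        print(message["role"].upper(), "\n", message["content"], "\n")
```

Keeping the prompt in code means changes to the persona or constraints go through the same review process as any other change to your tooling.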

Phase 3: Training an LLM on Jira Data Safely

A custom GPT is only as smart as the context it is given. To make your assistant truly useful, it needs to understand your backlog. But any connection between an LLM and your Jira data must go through secure integrations.

Do not manually export CSVs of your entire backlog. Instead, use authenticated API calls or native integrations provided by your enterprise platform.

This is where many teams make fatal errors. We highly recommend reading our guide on Why 90% of AI Agile Tools Will Ruin Your Scaled Agile to understand the risks of connecting unvetted third-party plugins to your Jira instance.

Use a RAG (Retrieval-Augmented Generation) architecture. This allows your secure GPT to "read" the relevant Jira tickets in real time to answer your questions, without absorbing your proprietary ticket content into its core training weights.
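To make the retrieval idea concrete, here is a deliberately simplified sketch. It assumes the tickets have already been fetched through your secure integration and sit in memory as `TICKETS` (hypothetical sample data), and it uses plain keyword overlap as a stand-in for the embedding search a production RAG pipeline would normally use. The point is the shape of the flow: retrieve a few relevant tickets, then place only those in the prompt.

```python
import re

# Hypothetical tickets, as if already fetched via your secure integration.
TICKETS = [
    {"key": "PAY-101", "summary": "Checkout fails when card expires mid-session", "status": "In Progress"},
    {"key": "PAY-102", "summary": "Add retry logic to refund webhook", "status": "Code Review"},
    {"key": "WEB-7", "summary": "Update footer links on marketing site", "status": "Done"},
]


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, tickets: list, k: int = 2) -> list:
    """Return up to k tickets that share the most words with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(t["summary"])), t) for t in tickets]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [ticket for _, ticket in relevant[:k]]


def build_prompt(question: str, tickets: list) -> str:
    """Embed only the retrieved tickets in the prompt sent to the model."""
    context = "\n".join(f'{t["key"]} [{t["status"]}]: {t["summary"]}' for t in tickets)
    return (
        "Answer using only the tickets below.\n\n"
        f"Tickets:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    q = "Which ticket covers the refund webhook retry?"
    print(build_prompt(q, retrieve(q, TICKETS)))
```

Because only the retrieved tickets reach the model, the rest of the backlog never leaves your integration layer for that query.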

Deploying Your Dedicated Agile AI Assistant

Once your secure, custom GPT is built, you must integrate it into your daily agile ceremonies. A dedicated agile AI assistant is a force multiplier for a Scrum Master's daily workflow.

Automating Backlog Refinement

Prior to a refinement session, the Product Owner can ping the custom GPT with a rough feature idea.

Because the GPT already knows your Definition of Done and your architectural constraints via its system prompt, it can instantly translate a one-sentence feature request into a fully fleshed-out epic.

It can flag missing acceptance criteria and draft QA test parameters before the developers even see the ticket.
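Some of this checking does not need a model at all. As a rough sketch, assuming your acceptance criteria are written in Gherkin as the system prompt requires, a few lines of code can flag a draft that is missing a Given, When, or Then step before it reaches refinement. The function name `missing_gherkin_steps` is illustrative.

```python
import re

REQUIRED_KEYWORDS = ("Given", "When", "Then")


def missing_gherkin_steps(acceptance_criteria: str) -> list:
    """Return the Gherkin keywords that never start a line in the text."""
    missing = []
    for keyword in REQUIRED_KEYWORDS:
        # A step counts only if the keyword opens a line (leading spaces allowed).
        pattern = rf"^\s*{keyword}\b"
        if not re.search(pattern, acceptance_criteria, flags=re.MULTILINE):
            missing.append(keyword)
    return missing


if __name__ == "__main__":
    draft = "Given a logged-in user\nThen the order is saved"
    print(missing_gherkin_steps(draft))  # prints ['When']
```

A deterministic check like this pairs well with the GPT: the model drafts the story, and the script confirms the output format was actually followed.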

Real-Time Flow Diagnostics

During the active sprint, you can ask your custom GPT to analyze the current state of the board.

By feeding it the active burndown metrics (via your secure API), the AI can instantly identify bottlenecks.

It can notice that a ticket has been stuck in "Code Review" for three days and suggest a swarming strategy based on the specific developers' past resolution times.
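The "stuck for three days" rule is simple enough to compute before the model is ever involved, which gives the GPT a factual list to reason about. A minimal sketch, assuming each ticket record carries a `status` and a `status_since` timestamp (hypothetical field names; your tracker's export will differ):

```python
from datetime import datetime, timedelta


def find_stalled(tickets, now, status="Code Review", max_age=timedelta(days=3)):
    """Return keys of tickets that have sat in `status` for at least `max_age`."""
    stalled = []
    for ticket in tickets:
        age = now - ticket["status_since"]
        if ticket["status"] == status and age >= max_age:
            stalled.append(ticket["key"])
    return stalled


# Hypothetical board snapshot using naive datetimes for simplicity.
BOARD = [
    {"key": "APP-12", "status": "Code Review", "status_since": datetime(2025, 1, 6, 9, 0)},
    {"key": "APP-15", "status": "Code Review", "status_since": datetime(2025, 1, 9, 9, 0)},
    {"key": "APP-18", "status": "In Progress", "status_since": datetime(2025, 1, 1, 9, 0)},
]
NOW = datetime(2025, 1, 10, 9, 0)

if __name__ == "__main__":
    print(find_stalled(BOARD, NOW))  # prints ['APP-12']
```

Passing the resulting keys to the assistant, rather than the whole board, keeps the prompt small and the analysis grounded in real data.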

Objective Retrospective Analysis

Humans suffer from recency bias; we tend to remember the things that went wrong in the last 48 hours of a two-week sprint.

Your custom GPT works from the full record instead. By feeding it the anonymized sprint logs, it can identify systemic trends across multiple sprints, providing objective, data-driven insights for your retrospective that are easy for humans to miss.
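Here is one way the anonymization and trend-spotting steps might look. This is a sketch under stated assumptions: log entries are dictionaries with `sprint`, `author`, and `theme` fields (hypothetical names), and a salted hash stands in for whatever pseudonymization scheme your privacy team approves. It handles only the `author` field, so names buried in free-text notes would need separate scrubbing.

```python
import hashlib


def pseudonym(name: str, salt: str) -> str:
    """Map a real name to a stable pseudonym using a salted SHA-256 hash."""
    digest = hashlib.sha256((salt + name).encode("utf-8")).hexdigest()
    return f"dev-{digest[:8]}"


def anonymize(entries: list, salt: str) -> list:
    """Return copies of the entries with the author field pseudonymized."""
    return [{**entry, "author": pseudonym(entry["author"], salt)} for entry in entries]


def recurring_themes(entries: list, min_sprints: int = 2) -> dict:
    """Count distinct sprints per theme, keeping themes seen in min_sprints or more."""
    sprints_per_theme = {}
    for entry in entries:
        sprints_per_theme.setdefault(entry["theme"], set()).add(entry["sprint"])
    return {
        theme: len(sprints)
        for theme, sprints in sprints_per_theme.items()
        if len(sprints) >= min_sprints
    }


# Hypothetical retrospective notes from three sprints.
LOGS = [
    {"sprint": 41, "author": "Alice", "theme": "flaky tests"},
    {"sprint": 42, "author": "Bob", "theme": "flaky tests"},
    {"sprint": 42, "author": "Alice", "theme": "unclear requirements"},
    {"sprint": 43, "author": "Alice", "theme": "flaky tests"},
]

if __name__ == "__main__":
    safe_logs = anonymize(LOGS, salt="rotate-me")
    print(recurring_themes(safe_logs))  # prints {'flaky tests': 3}
```

The same pseudonym is produced for the same person within one salt, so per-person patterns survive while real names stay out of the prompt.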

Frequently Asked Questions (FAQ)

What is a custom agile AI assistant?

A custom agile AI assistant is a heavily configured Large Language Model deployed within a secure enterprise firewall. It is explicitly programmed with strict system prompts to act as a Scrum Master, natively understanding your specific product architecture, agile frameworks, and team definitions of done without exposing data.

How do you build a custom GPT for Scrum securely?

To build it securely, you must bypass consumer-grade AI tools entirely. You set up an enterprise workspace (like Azure OpenAI or ChatGPT Enterprise) that excludes your data from model training and, where available, enforces zero data retention. You then configure custom system prompts and connect your project management tools using secure, strongly authenticated APIs to prevent data leakage.

Can I train an LLM on my proprietary Jira data?

Yes, but you should not literally "train" the base model on it. Instead, you use Retrieval-Augmented Generation (RAG). This allows the secure enterprise LLM to temporarily fetch and read your Jira data to answer a specific query, without the sensitive proprietary information ever becoming part of the model's weights. How long the fetched text is stored afterward depends on your platform's retention settings.

Why shouldn't I use public ChatGPT for Scrum events?

Using public LLMs for Scrum events is a critical cybersecurity risk. Consumer-tier models may retain your inputs and use them to train future versions. If you paste proprietary source code, confidential product roadmaps, or sensitive bug reports into a public chat, you are sending your company's intellectual property to external servers you do not control.

Conclusion

The divide between high-performing agile teams and legacy operations is widening rapidly. You can no longer afford to run manual, intuition-based sprints.

But equally, you cannot risk your company's intellectual property on unvetted, public AI models.

By mastering how to build a custom GPT for Scrum, you bridge this gap. You create a secure, context-aware co-pilot that understands your team's exact cadence, enforces your agile framework, and accelerates your delivery pipeline.

Stop relying on shadow AI, secure your enterprise environment, and start engineering your customized agile future today.
